It was 2022 and Cai, then 16, was scrolling on his phone. He says one of the first videos he saw on his social media feeds was of a cute dog. But then, it all took a turn.

He says “out of nowhere” he was recommended videos of someone being hit by a car, a monologue from an influencer sharing misogynistic views, and clips of violent fights. He found himself asking – why me?

Over in Dublin, Andrew Kaung was working as an analyst on user safety at TikTok, a role he held for 19 months from December 2020 to June 2022.

He says he and a colleague decided to examine what users in the UK, including some 16-year-olds, were being recommended by the app’s algorithms. Not long before, he had worked for rival company Meta, which owns Instagram – another of the sites Cai uses.

When Andrew looked at the TikTok content, he was alarmed to find how some teenage boys were being shown posts featuring violence and pornography, and promoting misogynistic views, he tells BBC Panorama. He says, in general, teenage girls were recommended very different content based on their interests.

TikTok and other social media companies use AI tools to remove the vast majority of harmful content and to flag other content for review by human moderators, regardless of the number of views it has had. But the AI tools cannot identify everything.

Andrew Kaung says that during the time he worked at TikTok, all videos that were not removed or flagged to human moderators by AI – or reported by other users to moderators – would only then be reviewed again manually if they reached a certain threshold.

He says at one point this was set to 10,000 views or more. He feared this meant some younger users were being exposed to harmful videos. Most major social media companies allow people aged 13 or above to sign up.
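The rule Andrew Kaung describes can be illustrated with a minimal Python sketch. This is a toy model only, not TikTok's code: the `Video` fields, the `needs_manual_review` function and the constant name are hypothetical, and the 10,000 figure is simply the threshold he says applied at one point.

```python
from dataclasses import dataclass

# Threshold Andrew Kaung says was used at one point.
# Every name in this sketch is hypothetical.
VIEW_THRESHOLD = 10_000


@dataclass
class Video:
    views: int
    removed_by_ai: bool = False
    flagged_by_ai: bool = False
    reported_by_user: bool = False


def needs_manual_review(video: Video) -> bool:
    """Return True if the video would go to a human moderator under the described rule."""
    if video.removed_by_ai:
        return False  # already taken down, nothing left to review
    if video.flagged_by_ai or video.reported_by_user:
        return True  # sent to moderators regardless of view count
    # Everything else is only looked at again once it is widely seen.
    return video.views >= VIEW_THRESHOLD
```

Under these assumptions, a video that slips past the AI tools and is never reported stays unreviewed at 9,999 views, which is the gap he says worried him.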

TikTok says 99% of content it removes for violating its rules is taken down by AI or human moderators before it reaches 10,000 views. It also says it undertakes proactive investigations on videos with fewer than this number of views.

When he worked at Meta between 2019 and December 2020, Andrew Kaung says there was a different problem. He says that, while the majority of videos were removed or flagged to moderators by AI tools, the site relied on users to report other videos once they had already seen them.

He says he raised concerns while at both companies, but was met mainly with inaction, which he attributes to fears about the amount of work involved or the cost. He says some improvements were subsequently made at TikTok and Meta, but that younger users, such as Cai, were left at risk in the meantime.

Several former employees from the social media companies have told the BBC Andrew Kaung’s concerns were consistent with their own knowledge and experience.

Algorithms from all the major social media companies have been recommending harmful content to children, even if unintentionally, UK regulator Ofcom tells the BBC.

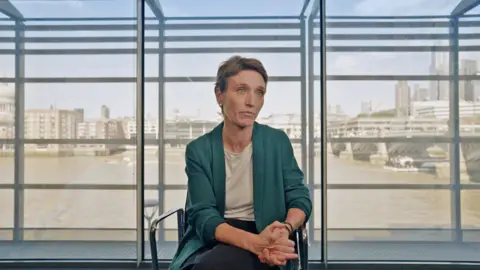

“Companies have been turning a blind eye and have been treating children as they treat adults,” says Almudena Lara, Ofcom’s online safety policy development director.

‘My friend needed a reality check’

TikTok told the BBC it has “industry-leading” safety settings for teens and employs more than 40,000 people working to keep users safe. It said this year alone it expects to invest “more than $2bn (£1.5bn) on safety”, and of the content it removes for breaking its rules it finds 98% proactively.

Meta, which owns Instagram and Facebook, says it has more than 50 different tools, resources and features to give teens “positive and age-appropriate experiences”.

Cai told the BBC he tried to use one of Instagram’s tools and a similar one on TikTok to say he was not interested in violent or misogynistic content – but he says he continued to be recommended it.

He is interested in UFC – the Ultimate Fighting Championship. He also found himself watching videos from controversial influencers when they were sent his way, but he says he did not want to be recommended this more extreme content.

“You get the picture in your head and you can’t get it out. [It] stains your brain. And so you think about it for the rest of the day,” he says.

Girls he knows who are the same age have been recommended videos about topics such as music and make-up rather than violence, he says.

Meanwhile Cai, now 18, says he is still being pushed violent and misogynistic content on both Instagram and TikTok.

When we scroll through his Instagram Reels, they include an image making light of domestic violence. It shows two characters side by side, one of whom has bruises, with the caption: “My Love Language”. Another shows a person being run over by a lorry.

Cai says he has noticed that videos with millions of likes can be persuasive to other young men his age.

For example, he says one of his friends became drawn into content from a controversial influencer – and started to adopt misogynistic views.

His friend “took it too far”, Cai says. “He started saying things about women. It’s like you have to give your friend a reality check.”

Cai says he has commented on posts to say that he doesn’t like them, and when he has accidentally liked videos, he has tried to undo it, hoping it will reset the algorithms. But he says he has ended up with more videos taking over his feeds.

So, how do TikTok’s algorithms actually work?

According to Andrew Kaung, the algorithms’ fuel is engagement, regardless of whether the engagement is positive or negative. That could explain in part why Cai’s efforts to manipulate the algorithms weren’t working.

The first step for users is to specify some likes and interests when they sign up. Andrew says some of the content initially served up by the algorithms to, say, a 16-year-old, is based on the preferences they give and the preferences of other users of a similar age in a similar location.

According to TikTok, the algorithms are not informed by a user’s gender. But Andrew says the interests teenagers express when they sign up often have the effect of dividing them up along gender lines.

The former TikTok employee says some 16-year-old boys could be exposed to violent content “right away”, because other teenage users with similar preferences have expressed an interest in this type of content – even if that just means spending more time on a video that grabs their attention for that little bit longer.

The interests indicated by many teenage girls in profiles he examined – “pop singers, songs, make-up” – meant they were not recommended this violent content, he says.

He says the algorithms use “reinforcement learning” – a method where AI systems learn by trial and error – and train themselves to detect behaviour towards different videos.

Andrew Kaung says they are designed to maximise engagement by showing you videos they expect you to spend longer watching, comment on, or like – all to keep you coming back for more.
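A toy Python sketch shows why engagement-driven ranking can work against a user like Cai. It is not reinforcement learning and not how any real platform is implemented: it is a fixed-weight score, and the `Interaction` fields, the weights and the function names are all hypothetical. The point it illustrates is that a comment counts as engagement whether or not it is approving.

```python
from dataclasses import dataclass


@dataclass
class Interaction:
    video_id: str
    watch_seconds: float
    liked: bool = False
    commented: bool = False  # the comment's sentiment is never inspected


def engagement_score(interaction: Interaction) -> float:
    """Combine watch time, likes and comments using made-up illustrative weights."""
    score = interaction.watch_seconds
    if interaction.liked:
        score += 5.0
    if interaction.commented:
        score += 10.0  # "I don't like this" counts the same as praise
    return score


def rank_videos(interactions: list[Interaction]) -> list[str]:
    """Order video IDs from most to least total engagement."""
    totals: dict[str, float] = {}
    for item in interactions:
        totals[item.video_id] = totals.get(item.video_id, 0.0) + engagement_score(item)
    return sorted(totals, key=totals.get, reverse=True)
```

In this sketch, a user who briefly watches and likes a dog video, but lingers on a fight video and comments to say they dislike it, ends up with the fight video ranked first.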

The algorithm recommending content to TikTok’s “For You Page”, he says, does not always differentiate between harmful and non-harmful content.

According to Andrew, one of the problems he identified when he worked at TikTok was that the teams involved in training and coding that algorithm did not always know the exact nature of the videos it was recommending.

“They see the number of viewers, the age, the trend, that sort of very abstract data. They wouldn’t necessarily be actually exposed to the content,” the former TikTok analyst tells me.

That was why, in 2022, he and a colleague decided to take a look at what kinds of videos were being recommended to a range of users, including some 16-year-olds.

He says they were concerned about violent and harmful content being served to some teenagers, and proposed to TikTok that it should update its moderation system.

They wanted TikTok to clearly label videos so everyone working there could see why they were harmful – extreme violence, abuse, pornography and so on – and to hire more moderators who specialised in these different areas. Andrew says their suggestions were rejected at that time.

TikTok says it had specialist moderators at the time and, as the platform has grown, it has continued to hire more. It also said it separated out different types of harmful content – into what it calls queues – for moderators.

‘Asking a tiger not to eat you’

Andrew Kaung says that from the inside of TikTok and Meta it felt really difficult to make the changes he thought were necessary.

“We are asking a private company whose interest is to promote their products to moderate themselves, which is like asking a tiger not to eat you,” he says.

He also says he thinks children’s and teenagers’ lives would be better if they stopped using their smartphones.

But for Cai, banning phones or social media for teenagers is not the solution. His phone is integral to his life – a really important way of chatting to friends, navigating when he is out and about, and paying for stuff.

Instead, he wants the social media companies to listen more to what teenagers don’t want to see. He wants the firms to make the tools that let users indicate their preferences more effective.

“I feel like social media companies don’t respect your opinion, as long as it makes them money,” Cai tells me.

In the UK, a new law will force social media firms to verify children’s ages and stop the sites recommending porn or other harmful content to young people. UK media regulator Ofcom is in charge of enforcing it.

Almudena Lara, Ofcom’s online safety policy development director, says that while harmful content that predominantly affects young women – such as videos promoting eating disorders and self-harm – has rightly been in the spotlight, the algorithmic pathways driving hate and violence to mainly teenage boys and young men have received less attention.

“It tends to be a minority of [children] that get exposed to the most harmful content. But we know, however, that once you are exposed to that harmful content, it becomes unavoidable,” says Ms Lara.

Ofcom says it can fine companies and could bring criminal prosecutions if they do not do enough, but the measures will not come into force until 2025.

TikTok says it uses “innovative technology” and provides “industry-leading” safety and privacy settings for teens, including systems to block content that may not be suitable, and that it does not allow extreme violence or misogyny.

Meta, which owns Instagram and Facebook, says it has more than “50 different tools, resources and features” to give teens “positive and age-appropriate experiences”. According to Meta, it seeks feedback from its own teams and potential policy changes go through a robust process.

Source: BBC

The post ‘It stains your brain’: How social media algorithms show violence to boys appeared first on Ghanaian Times.
